Written by Chris Goodell, P.E., D. WRE | WEST Consultants

Copyright © RASModel.com. 2009. All rights reserved.

What does this mean? RAS allows you to enter values (stations, elevations, etc.) out to many decimal places. I believe most input variables use single-precision floating point numbers, and some use double precision. Although you may see numbers rounded automatically throughout, the program still carries the added "precision" in its internal memory.
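To get a feel for what single precision can actually hold, here is a minimal Python sketch. It says nothing about HEC-RAS internals; it only round-trips a number through a generic 4-byte float, and the elevation value and the `to_single` helper are made up for illustration.

```python
import struct


def to_single(value: float) -> float:
    """Round-trip a Python float (double precision) through a 4-byte single-precision float."""
    return struct.unpack("<f", struct.pack("<f", value))[0]


# Hypothetical elevation typed in with far more decimals than the survey supports.
elevation_ft = 1234.56789
stored_ft = to_single(elevation_ft)

print(f"entered: {elevation_ft:.8f} ft")
print(f"stored : {stored_ft:.8f} ft")
print(f"error  : {abs(stored_ft - elevation_ft):.2e} ft")
```

Single precision carries roughly seven significant digits, so for an elevation in the thousands of feet the digits beyond about the fourth decimal place are not really being stored, no matter how many you type.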

The results from a RAS model carry with them a fair amount of uncertainty. The magnitude of this uncertainty varies from model to model, but typically a sensitivity analysis can quantify it to some degree. I can, however, assure you that even the simplest HEC-RAS model is not certain to within 0.01 ft for a water surface elevation. When you factor in roughness values, the discretization of continuous reaches into finite cross sections, station-elevation approximations of continuous cross sections, ineffective flow approximations, all the different coefficients used, the use of Manning's equation for non-uniform flow conditions, and quite frankly, the numerical solution schemes used (both steady and unsteady), all of a sudden you may not feel all that confident about your results. That's the primary reason why I believe computational models (including HEC-RAS) serve us best when they are used as comparison tools: comparing one or more alternatives to a baseline condition using the same assumed uncertain parameters. Using RAS as a means of design should be considered very carefully, with a complete understanding of the uncertainties involved.

Now here's an example of where we run into problems. FEMA requires us to evaluate floods using probabilistically derived flood events like the 500-year, 100-year, 50-year, 10-year, etc. What these return interval floods really mean is that in any given year there is a 0.2%, 1%, 2%, or 10% chance (respectively) that a flood of that magnitude will occur. However, at the same time, all of the other input data (survey data, Manning's n values, coefficients, etc.) are deterministically derived and carry with them a lot of uncertainty. In many cases, it's prudent to hedge to the conservative side so you don't have to deal with the uncertainty. However, when delineating floodplains, going conservative could mean someone's house is in the floodplain when it really should not be. What this boils down to is that, because we use deterministically derived input data for FEMA flood studies, a LOT of control falls in the hands of the modeler and the reviewer. You can say "The 100-year floodplain begins HERE." In reality, you should say there is some probability that the flood will occur here. But that's not the way it is set up. I think eventually we will do away with return interval floods, and all uncertain parameters will be assigned probabilities. Instead of saying the "100-year flood will impact HERE", we'll say "There is a 1% chance that in any given year flood waters will reach HERE." Factored into that 1% probability will be ALL of the uncertain input parameters, not just the flood discharge. The Corps of Engineers is doing this to some extent with levee work. In fact, they have mandated that all levee work will be evaluated using risk and uncertainty, rather than the traditional deterministic methods.

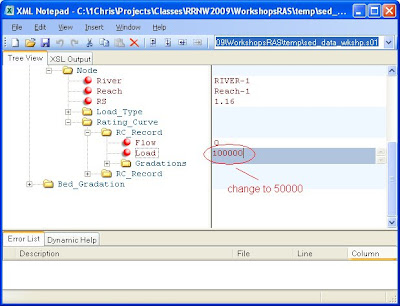

So...getting back to the "precision" issue. One modeler can take all of his input data out to 0.00001 ft (or cfs, or whatever). Another modeler can run the exact same model, only her input data will be appropriately rounded to 0.01 ft (you could make the same example with Manning's n values: 0.035 versus 0.04). The two models will produce different results. The differences in the results can be considered within the "uncertainty bounds" of the model. No problem, right? Well, with FEMA, a reproduction model cannot show differences. Furthermore, no-rise certificates mean "no rise", no explanations allowed. What does this mean? Usually it means the modeler will identify uncertain parameters and tweak them within their uncertainty bounds to produce the results they are after. For example, let's say a model is showing a 0.01 ft rise 100 ft upstream of a bridge for a new bridge design. Most hydraulic engineers recognize that 0.01 ft doesn't really mean anything; it is well within the error tolerances of our model. However, we are not allowed to show a rise at all. So, we tweak the ineffective flow triggers, or the pier coefficients, or whatever other uncertain parameters we can identify, within a realistic range, until we show 0.00 ft of rise. Is this the best way to run a study? I don't think so, but within the FEMA-imposed regulation of analyzing probabilistic events with deterministic input parameters, it might be the only alternative. Hopefully FEMA will eventually switch to a complete risk and uncertainty-based analysis, so that we can avoid this "silliness".
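To put the Manning's n example in rough numbers, here is a back-of-the-envelope Python sketch. It is not how HEC-RAS computes a water surface. It assumes uniform flow in a wide rectangular channel in US customary units, where Manning's equation reduces to y = (q n / (1.486 S^0.5))^(3/5), and the unit discharge and slope are hypothetical values picked only for illustration.

```python
import math


def normal_depth_wide_channel(q_cfs_per_ft: float, n: float, slope: float) -> float:
    """Normal depth (ft) from Manning's equation for a wide rectangular channel.

    Assumes uniform flow and hydraulic radius ~ depth (US customary units).
    """
    return (q_cfs_per_ft * n / (1.486 * math.sqrt(slope))) ** 0.6


Q_UNIT = 50.0   # cfs per ft of width (hypothetical)
SLOPE = 0.001   # ft/ft (hypothetical)

depth_unrounded = normal_depth_wide_channel(Q_UNIT, 0.035, SLOPE)
depth_rounded = normal_depth_wide_channel(Q_UNIT, 0.04, SLOPE)

print(f"n = 0.035: depth = {depth_unrounded:.2f} ft")
print(f"n = 0.04 : depth = {depth_rounded:.2f} ft")
print(f"difference = {depth_rounded - depth_unrounded:.2f} ft")
```

Under these assumptions the ratio of the two depths is (0.04 / 0.035)^0.6, or about 8 percent, regardless of the discharge and slope chosen. For any flow more than a fraction of a foot deep, that dwarfs the 0.01 ft that a no-rise certificate hinges on.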

Please post comments to this. I'd like to hear what others think about this topic.

This has all got me thinking about fishing off the gulf coast...